May 11, 2026

PIM Software Is Just an Empty Box (Unless You Fix This First)

Chris Johnson

CEO

Every PIM vendor shows you the same demo. A clean dashboard. Perfectly structured product records. Attribute fields already filled in. Images tagged and organized. A single source of truth that looks like it arrived that way.

What they skip is the six months of human labor behind that demo environment. The team of three data operations people who keep it looking that way. The 47-column manufacturer CSV sitting in someone's downloads folder that nobody has gotten around to mapping yet.

PIM software is a container. It only becomes useful once you figure out how to fill it.

What PIM Software Actually Does

Product information management software is a centralized database for everything that describes your products: names, descriptions, technical specifications, images, pricing, categorization, localization, compliance data, channel-specific content. Instead of that data living in twelve different spreadsheets across three departments, a PIM gives it a single home.

That framing is accurate and genuinely useful. A well-run PIM does eliminate the nightmare of five people maintaining five versions of the same product catalog. It makes multichannel publishing less painful. It creates audit trails, approval workflows, and governance structures that spreadsheets simply cannot.

But the PIM doesn't do any of that automatically. It creates the conditions for it. The software is the filing cabinet. Someone still has to file.

A better analogy: buying PIM software is like buying a CRM. The CRM doesn't know your customers. It doesn't know your deal history. It doesn't know your pipeline. You build all of that. The software gives you a structured place to put it, and some tools for working with it once it's there.

The Promise vs. The Reality

The vendor pitch for product information management software usually centers on three outcomes: a single source of truth, faster time-to-market, and reduced errors across channels. Those outcomes are real, when they happen. What it takes to get there is the part the sales deck leaves out.

Most retailers who have been through a PIM implementation say the same thing. The software worked exactly as advertised. The problem was everything the advertising left out.

According to a Plytix survey on PIM adoption, one-third of companies still depend heavily on spreadsheets to manage product records, and nearly half (46%) still depend on them somewhat, even among organizations that have already implemented a PIM system.

That number isn't evidence that PIM failed those organizations. It's evidence that PIM didn't replace the underlying work. It just gave that work a better home. The spreadsheets persist because someone still has to download the manufacturer's file, clean it, and get it ready before it can live in the PIM.

The core issue is that PIM vendors have conflated two separate problems. The first is governance: where does product data live, who owns it, how do you track changes, how do you push updates to channels. PIM software solves that. The second is enrichment and ingestion: where does the raw data come from, how does it get standardized, who fills in the gaps when a manufacturer sends you incomplete information. PIM software assumes you've already solved that. Most retailers discover this assumption the hard way, after the contract is signed.

You Bought the Container. Now Fill It.

The software ships empty. Every product record needs to be created, enriched, and maintained. For a retailer with a 10,000 SKU catalog across 120 suppliers, that is not an onboarding project. It is a permanent operational function.

Placing one SKU manually on a web store requires 20 to 46 minutes of your time (Scallium Pro, via Plytix).

Do that math at scale. A 10,000 SKU catalog, even at the low end of that range, is over 3,300 hours of data entry just to populate the system initially. That is before you have normalized a single attribute. Before you have checked whether any of it is accurate. Before the first manufacturer sends their quarterly update and you have to reconcile the changes.

This is the work that makes or breaks PIM ROI. And it is the work almost no vendor discusses at the point of sale.

The People You Forgot to Budget For

When retailers scope a PIM implementation, the typical budget has two line items: the software license and the implementation project. A third-party team comes in, migrates the data, configures the workflows, and hands over the keys. Then the project is "done."

Highly skilled knowledge workers dedicate approximately 19% of their total time to searching for data, and data engineers spend up to 40% of their time cleaning and preparing files (Integrate.io, 2024), even in organizations that have invested heavily in data infrastructure.

What does not make it into the project budget is the ongoing data operations function. Someone has to download supplier files every week or every month. Someone has to normalize those files: mapping supplier attribute names to your internal taxonomy, filling in the fields suppliers did not include, flagging records that fail your completeness thresholds. Someone has to push updates through the approval workflow and out to your channels. For a mid-size retailer, that is a 2 to 3 person team. For a large one, it is a dedicated department.

This is the hidden labor cost that makes PIM ROI math fall apart in year two.

The Manufacturer CSV Problem No One Talks About

Here is the part of the PIM conversation that vendors actively avoid.

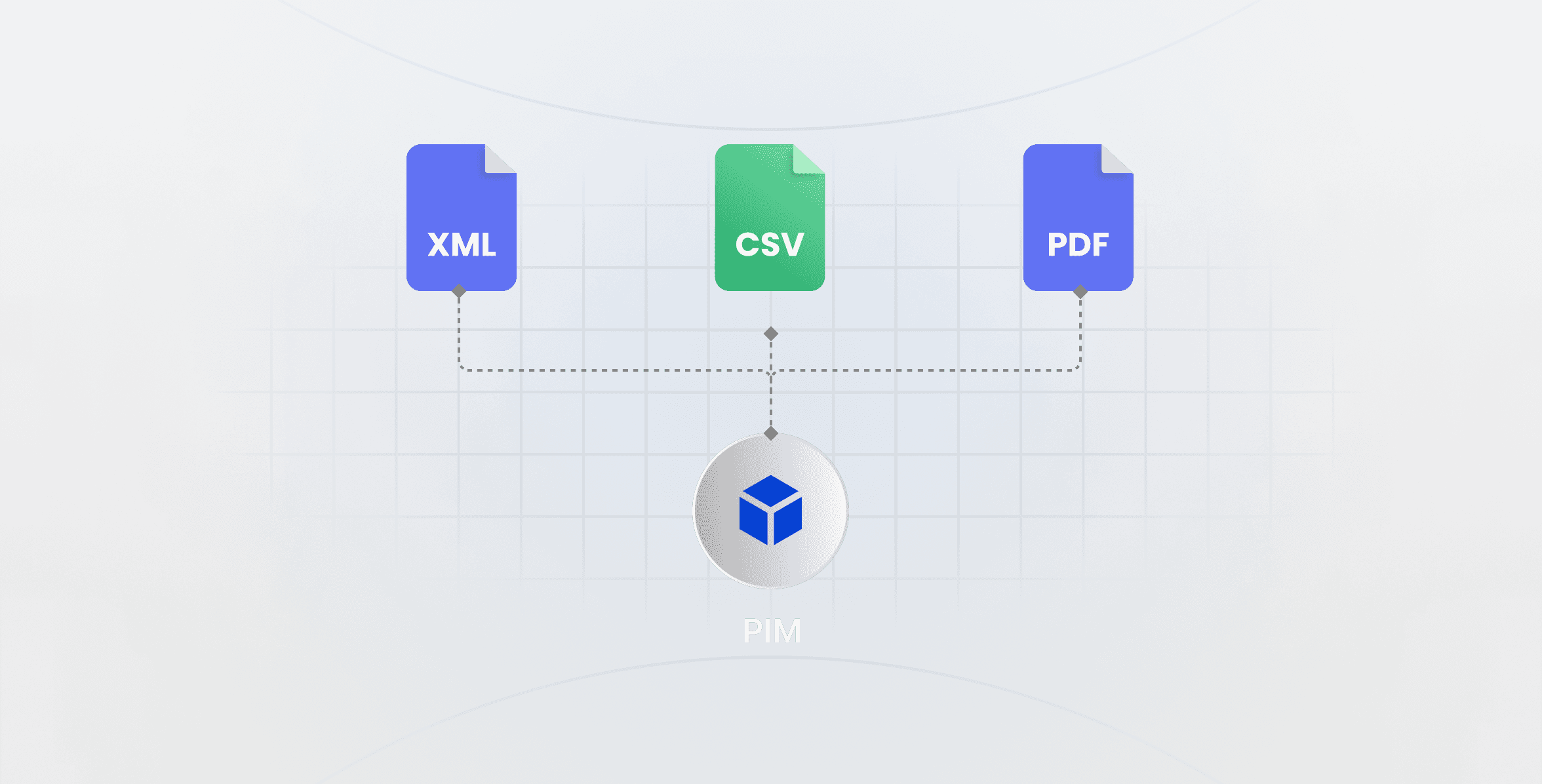

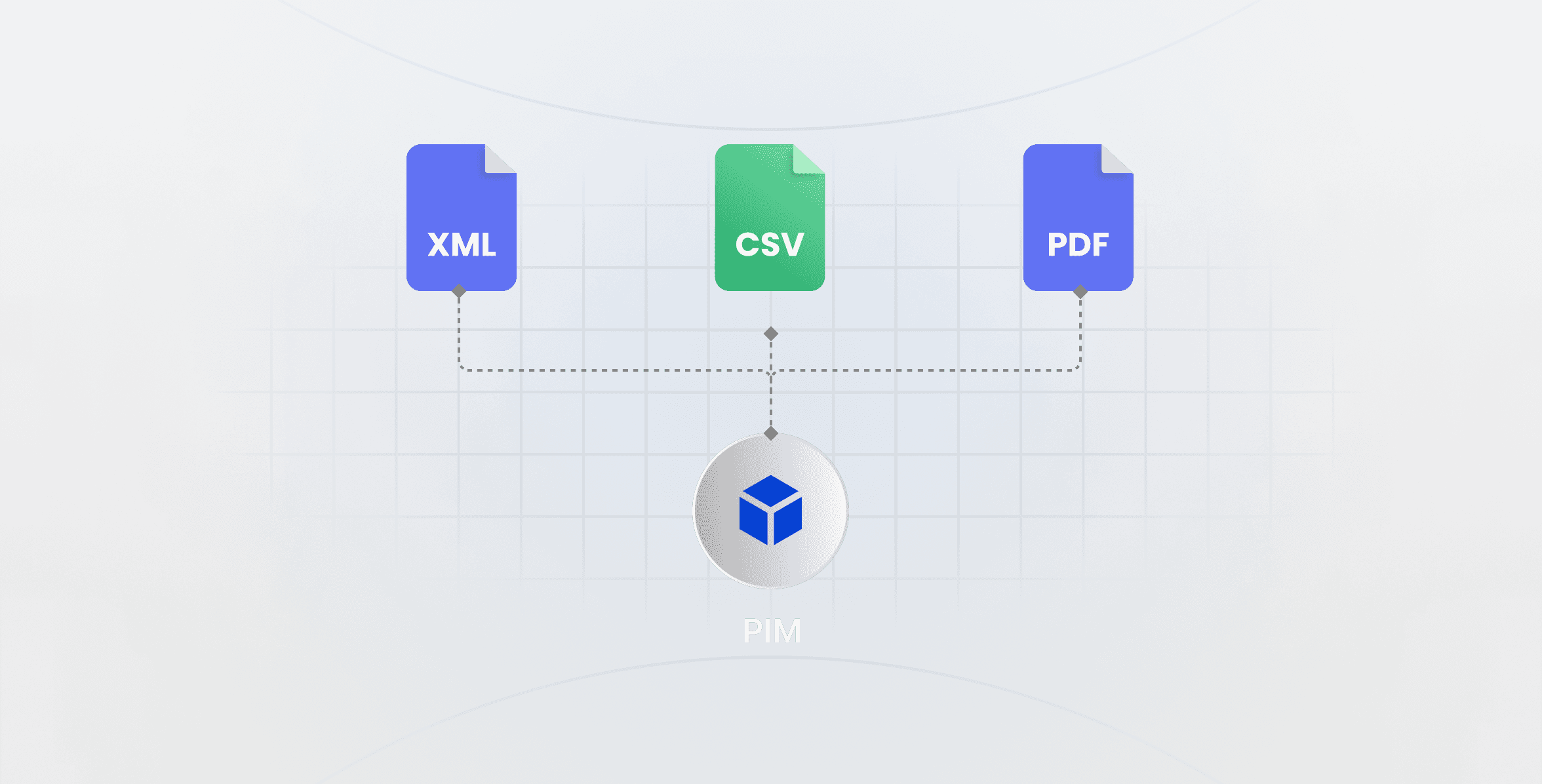

Your manufacturers do not agree on anything. Bosch sends a 47-column CSV with attribute names written in German engineering shorthand. GE sends an XML schema that has not been updated since 2019. LG sends a PDF spec sheet with no structured data at all, just a table in a document that someone has to manually transcribe. Samsung sends four different files depending on product category, each with different column structures and naming conventions.

A mid-size appliance retailer managing 120 supplier relationships receives 120 different data formats. Some arrive weekly. Some arrive monthly. Some arrive only when the manufacturer decides to send them. None of them arrive in the format your PIM expects.

The PIM has nothing to say about this problem. It waits on the other side of the normalization process. The actual work, receiving, parsing, mapping, and cleaning those supplier feeds, happens entirely outside the software, in spreadsheets, Python scripts, or brute-force manual effort. Tools built specifically to solve the ingestion problem, pulling structured SKU-level data directly from manufacturer sources in a normalized format, can eliminate most of this work before it ever reaches your PIM.

This is the structural flaw in the standard PIM pitch. Selling a retailer "centralized product data management" without addressing where that data comes from and how it gets standardized is like selling a restaurant "organized inventory management" without mentioning that someone still has to receive and unpack every delivery.

Attribute Mapping Is a Full-Time Job

Take one attribute across three appliance manufacturers: refrigerator storage capacity. One manufacturer calls it "storage capacity (cu ft)." Another calls it "net volume (liters)." A third buries it in a "specifications" field as a string value: "18.6 cu. ft. total capacity."

Before that attribute can live in your PIM under a consistent field name, someone has to identify it, extract it, convert units if necessary, and map it. Then do the same thing for every other attribute across every product across every supplier. And when any manufacturer updates their data model, which happens every time they launch a new product line, the mapping work starts again.

At 120 suppliers and quarterly catalog updates, this is a dedicated role. Not a quarterly project. A role.

Factor in data quality failures and it gets worse. When a supplier sends an updated CSV with a renamed column, or changes their attribute schema without warning, your entire mapping breaks. Records stop populating. Fields go blank. Products go live with missing specifications. 40% of consumers say they have returned a product because pre-purchase product information turned out to be incorrect (360 Magazine, 2025). Product returns from data errors are expensive, and they erode the trust customers have in your catalog.

Attribute mapping is not a technical problem that better PIM software will eventually solve. It is a structural reality of working with manufacturers who have no incentive to standardize their outbound data formats for your convenience.

What PIM Software Looks Like When It Actually Works

PIM software, when deployed correctly, does deliver the outcomes it promises. The retailers who get real ROI are not the ones who bought the best software. They are the ones who solved the ingestion layer first.

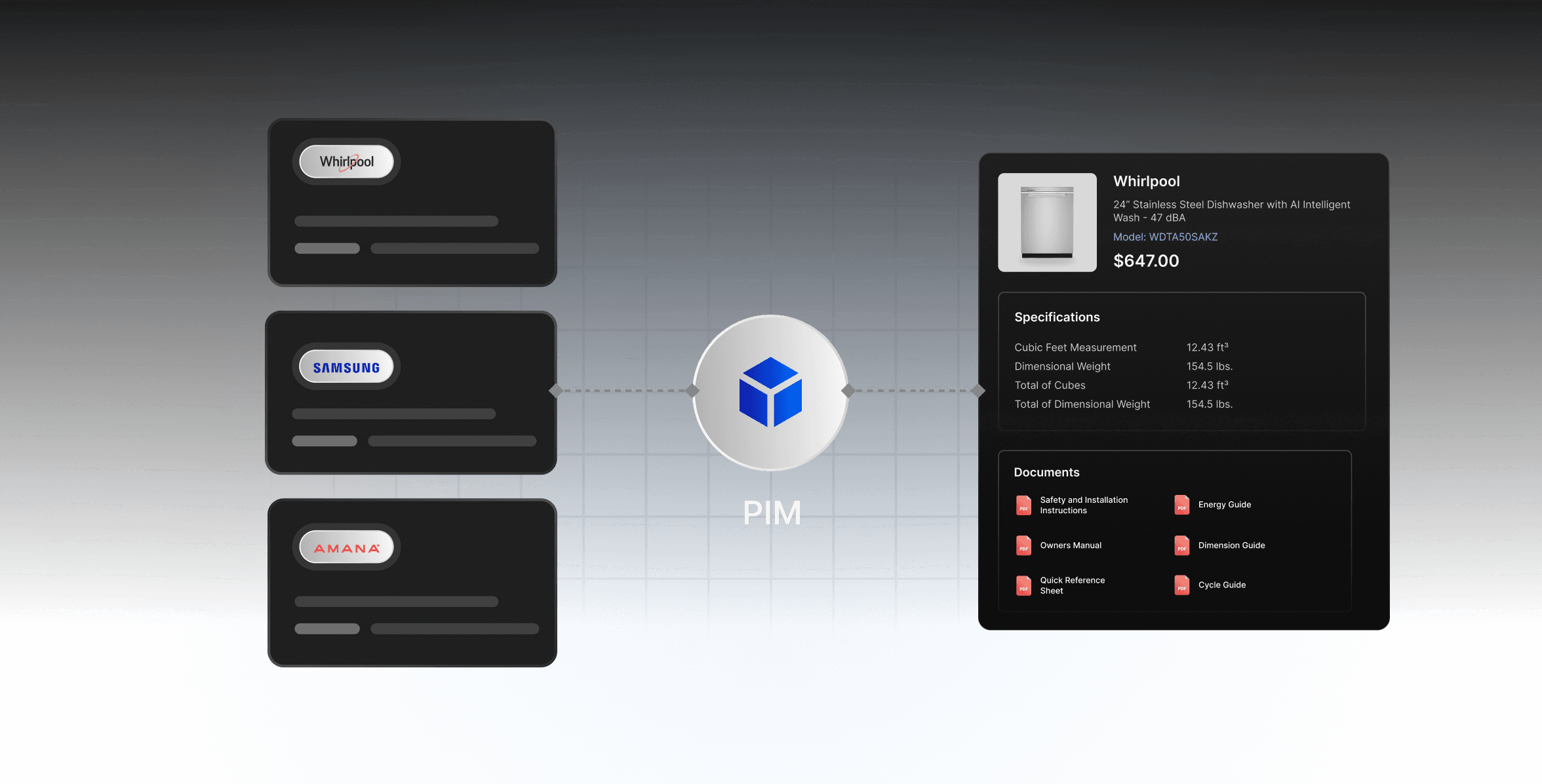

The pattern looks like this. The retailer establishes supplier data standards: required attributes, preferred formats, naming conventions. They build or buy connectors that pull supplier files automatically on a schedule. They run normalization logic that maps incoming attributes to the internal taxonomy, flags exceptions, and routes incomplete records to an enrichment queue. The PIM then does what it is good at: storing, organizing, managing workflows, and syndicating to channels.

This upstream automation layer is what successful implementations have in common. Some retailers build it internally with a data engineering team. Others buy purpose-built feed management tooling. A few work with suppliers directly to standardize their outbound formats over time. The specific approach matters less than the principle: you need a reliable, automated pipeline between your suppliers and your PIM before the PIM can do its job. Skulytics, for example, provides a real-time product data API that delivers pre-normalized SKU-level data, so instead of your team parsing 120 different manufacturer formats, a single structured feed covers your entire catalog.

When that infrastructure is in place, PIM ecommerce integration becomes straightforward. The PIM feeds your storefront, your marketplaces, your print catalog, and your sales tools from a single clean source. Time-to-market compresses. Errors drop. The single source of truth materializes.

But that infrastructure is not the PIM. The PIM is the last step.

Key insight: The retailers who get real ROI from PIM software aren't the ones who bought the best platform. They're the ones who solved the data ingestion problem before they bought anything.

How to Evaluate PIM Software Without Getting Burned

If you are currently researching PIM software reviews or making a shortlist, the standard evaluation criteria matter: integrations, scalability, user interface, support. But they miss the question that will determine whether your implementation succeeds.

Ask every vendor: how does data get into your system? Not theoretically. Not "we support CSV import and API connections." Walk me through, specifically, how my team will get data from a manufacturer who sends a PDF spec sheet into a structured product record in your PIM.

The answer tells you everything. If the vendor pivots immediately to enrichment workflows, DAM integration, AI description generators, they are showing you features that are only useful after the data is already clean and structured. They are not answering the question.

Other criteria that separate real implementations from expensive shelfware:

Pilot with your messiest supplier data, not the clean sample files the vendor provides. Any PIM looks good with clean data.

Get a realistic headcount estimate for ongoing data operations from a customer in a similar segment, not from the vendor.

Check whether the vendor has pre-built connectors for your specific suppliers, or whether "connector" means a CSV import template.

Ask what percentage of their customers' initial implementation timelines go to data migration and cleaning versus software configuration.

If those questions feel uncomfortable to ask, that is useful information too.

The Real Question Is What Feeds Your PIM

The PIM software decision is less important than it looks. Most enterprise PIM platforms are genuinely capable. Akeneo, Pimcore, Plytix, Syndigo: all of them can store your product data, run your enrichment workflows, and push to your channels. The software is not the differentiator.

What differentiates a successful product data strategy from an expensive failed one is the answer to a single question: how will product data get into your system, and who will keep it current?

If the answer is "we will figure that out after we buy the PIM," you are going to spend the next two years paying a license fee for a sophisticated filing cabinet that nobody has time to maintain properly. The data operations function does not disappear because you bought new software. It becomes more conspicuous, because now you have a system with empty fields glaring at you instead of spreadsheets that nobody checks.

If the answer is "we are building an automated ingestion layer that normalizes supplier feeds before they hit the PIM, and we are staffing an ongoing data operations function," you are going to get the outcomes the vendor promised. The PIM will earn its license fee many times over. The single source of truth will be real.

The retailers who discover this the hard way usually circle back to the ingestion problem in year two, after the initial implementation enthusiasm has worn off and the team is quietly maintaining three parallel spreadsheets again. The retailers who figure it out on the front end skip that painful chapter entirely.

Start with the data problem. The software choice follows from there.